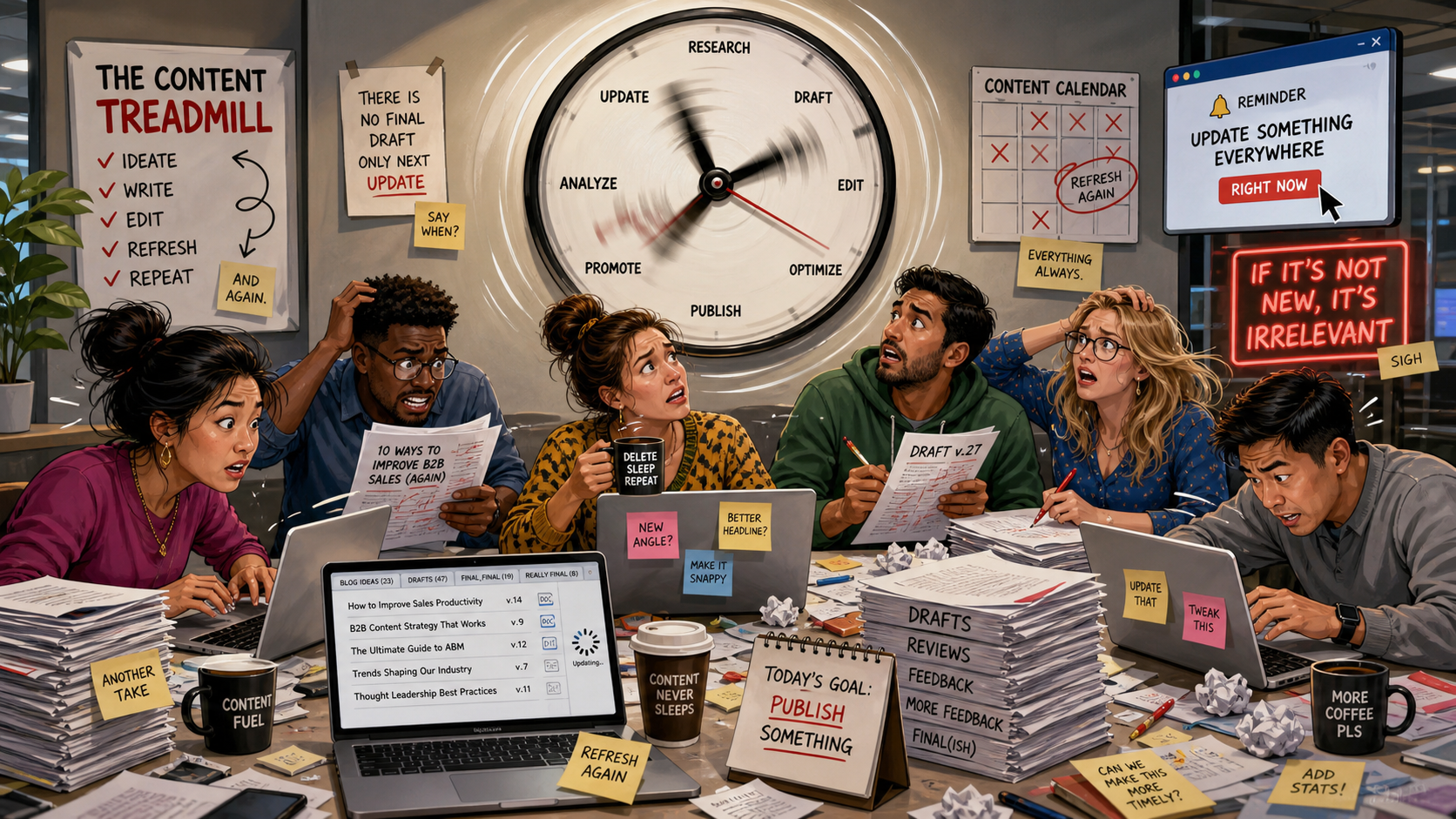

A B2B content lead at a mid-market SaaS company sets up a refresh schedule built around the 48-hour cadence advice making the rounds last summer. Two writers, six high-priority pages, every other day. The plan is rigorous on paper.

The reality, three months in, is that the citation needle hasn't moved, the writers are burned out, and the comparison guide that actually drives pipeline hasn't been touched in five weeks because it wasn't on the rotation.

The advice was bullshit. The data published since says monthly is the right cadence on high-priority pages, and that the verdict AI systems deliver is more stable than the surface they deliver it on.

What the rigorous data actually shows

Ahrefs analyzed the top 1,000 pages ChatGPT cited in September 2025. Of the 564 URLs where they could detect update dates, 76.4% had been updated within the last 30 days, and 89.7% had been updated in 2025. Pages updated 1-6 months ago accounted for 11%. Pages older than a year, just 8.9%.

The recency bias is real, well-documented, and ChatGPT-specific. (Ahrefs flagged the methodology caveats themselves: Wikipedia is 53% of the dataset, and "Last modified" timestamps sometimes reflect the date of the data request rather than actual content updates. Even excluding suspect timestamps and pulling Wikipedia out, more than 80% of cited pages still showed 2025 updates.)

Widen the lens, and the picture changes. Ahrefs' separate analysis of 17 million AI citations across seven platforms found that AI-cited content is 25.7% fresher than traditional Google organic on average, but the platforms diverge sharply. ChatGPT cites pages 393-458 days newer than what ranks in Google.

Google AI Overviews shows the weakest freshness bias of any major AI surface, citing pages that are on average 16 days older than what ranks organically. If your category is dominated by AIO traffic, the freshness conversation looks different than if it's dominated by ChatGPT.

Seer Interactive's log file analysis of 5,000+ URLs adds the cleanest single number for B2B planning: 65% of AI bot hits target content published in the past year, 89% within three years, 94% within five years. This is bot crawl behavior, not just citation behavior, and it lines up with what a sane refresh cadence implies. Monthly on the pages that matter most. Quarterly on category content. Trigger-based on everything else.

Nothing in this data supports a 48-hour cycle. The advice was telling you to operate at fifteen times the cadence the citation pattern actually rewards.
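The "fifteen times" figure is plain arithmetic, and a quick sketch makes it easy to check against your own schedule. The helper name and the 30-day definition of "monthly" are assumptions for illustration, not figures from any of the studies.

```python
# Assumption: "monthly" is treated as a 30-day interval.
MONTHLY_DAYS = 30


def cadence_multiple(target_interval_days: float, actual_interval_days: float) -> float:
    """How many times more often the actual schedule runs than the target one."""
    return target_interval_days / actual_interval_days


def refreshes_per_quarter(interval_days: float, quarter_days: int = 90) -> float:
    """Refreshes per page over a 90-day quarter at a given interval."""
    return quarter_days / interval_days


multiple = cadence_multiple(MONTHLY_DAYS, 2)       # 48-hour cycle vs. monthly
per_page_48h = refreshes_per_quarter(2)            # refreshes per page per quarter
per_page_monthly = refreshes_per_quarter(MONTHLY_DAYS)

print(f"{multiple:.0f}x the monthly cadence")
print(f"{per_page_48h:.0f} vs. {per_page_monthly:.0f} refreshes per page per quarter")
```

On the six-page rotation from the opening example, that works out to 270 refreshes a quarter on the 48-hour plan against 18 on a monthly one.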

The verdict is more stable than the surface

This is the part most teams miss, and it's the reason daily refresh feels productive while producing nothing.

SparkToro and Gumshoe ran 2,961 prompts in their January 2026 recommendation-stability study. The probability of getting an identical list twice from the same prompt was less than 1%. The recommendation lists shuffle every time. But top brands in narrow categories still appeared in 70-90% of runs.

The deck reshuffles, but the same cards keep coming up.

AirOps' analysis of 45,000+ citations found that only 30% of brands sustained visibility from one run to the very next. Just 1 in 5 brands maintained visibility from the first run through the fifth. The single-snapshot view of AI search is misleading by structural design.
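If you want to see the list-versus-brand distinction in your own tracking, a rough sketch like the one below is enough. It assumes you have logged the recommended brands from repeated runs of one prompt. The brand names and runs are made up, and this is not the methodology SparkToro, Gumshoe, or AirOps used.

```python
from collections import Counter
from itertools import combinations


def appearance_rates(runs):
    """Share of runs in which each brand appears at least once."""
    counts = Counter(brand for run in runs for brand in set(run))
    return {brand: n / len(runs) for brand, n in counts.items()}


def identical_pair_rate(runs):
    """Share of run pairs that returned exactly the same ordered list."""
    pairs = list(combinations(runs, 2))
    if not pairs:
        return 0.0
    return sum(a == b for a, b in pairs) / len(pairs)


# Hypothetical data: four runs of the same prompt.
runs = [
    ["Acme", "Bolt", "Cirrus"],
    ["Bolt", "Acme", "Dune"],
    ["Acme", "Cirrus", "Bolt"],
    ["Acme", "Echo", "Bolt"],
]

print(identical_pair_rate(runs))  # how often the exact list repeats
for brand, rate in sorted(appearance_rates(runs).items()):
    print(brand, rate)            # how often each brand shows up at all
```

In this toy data no two lists match, yet two of the five brands appear in every run. That is the pattern to look for before reading anything into a single snapshot.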

(Worth noting: AirOps also found that brands earning both a citation and a mention in the same response were 40% more likely to resurface across consecutive runs than brands cited only as a source link. Mention plus citation is more stable than citation alone.)

Ahrefs' study of 43,000 AI Overview keywords found something more counterintuitive. Between consecutive AI Overview captures, the text changes about 70% of the time. Cited URLs change 46% of the time. Entities mentioned change 54%. But cosine similarity between consecutive responses (a measure of how close two texts are in meaning, where 1.0 is identical and 0 is unrelated) sits at 0.95. The words shuffle. The URLs churn. The answer doesn't move.
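For readers who want the mechanics, here is a minimal sketch of cosine similarity. One loud assumption: it compares simple word counts, which measures word overlap rather than meaning. A study comparing meaning would more plausibly use text embeddings, and the source doesn't describe Ahrefs' exact setup, so treat this as the math only.

```python
import math
from collections import Counter


def cosine_similarity(text_a: str, text_b: str) -> float:
    """Cosine of the angle between two word-count vectors (1.0 = same direction)."""
    a = Counter(text_a.lower().split())
    b = Counter(text_b.lower().split())
    # Dot product over the words the two texts share.
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty text has no direction to compare
    return dot / (norm_a * norm_b)


# Hypothetical consecutive captures of the same answer.
first = "the best option for mid-market teams is a monthly refresh"
second = "for mid-market teams the best option is a monthly refresh cadence"
print(round(cosine_similarity(first, second), 2))
```

The word-count version rewards reordered sentences and punishes synonyms, which is exactly where an embedding-based score would behave differently.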

Read those three studies together and the implication is hard to miss. The AI surface is volatile. The recommendation underneath the surface isn't. A refresh cadence calibrated to the volatility (daily, every-48-hours, the panic schedules consultants were selling) is responding to noise. A refresh cadence calibrated to the recommendation (monthly, on the pages that drive consideration) is responding to signal.

How the 48-hour advice survived this long

The advice got repeated because it sounded like SEO advice. SEO trained a generation of marketers to believe more frequent equals better.

AI citation doesn't work that way. There's a floor below which staleness gets penalized, and a ceiling above which additional recency stops adding much. A 48-hour cycle overshoots that ceiling by a factor of fifteen, burns out writers, and produces cosmetic edits that AI systems can detect anyway.

A refresh isn't a timestamp change, but rather an update to claims, examples, comparison depth, or buyer-objection coverage. If the page isn't more useful afterward, it wasn't refreshed, it was cosmetically disturbed. (Google's John Mueller has been explicit that artificially refreshing publication dates without substantive change is treated as a manipulation signal in Google's own ranking systems. The directional read for AI citation is the same: cosmetic edits don't move the needle.)
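One way to keep yourself honest is a crude pre-publish check on how much of the page actually changed. The sketch below uses Python's standard `difflib` to compare the old and new copy word by word. The function names and the 10% threshold are assumptions for illustration. This is not how Google or any AI system detects cosmetic edits, and it can't tell you whether the page got more useful, only whether it changed at all.

```python
import difflib


def changed_fraction(old_text: str, new_text: str) -> float:
    """Rough share of the copy that differs, compared word by word."""
    old_words = old_text.split()
    new_words = new_text.split()
    similarity = difflib.SequenceMatcher(None, old_words, new_words).ratio()
    return 1.0 - similarity


def is_substantive_refresh(old_text: str, new_text: str, min_changed: float = 0.10) -> bool:
    """True if at least min_changed of the copy differs (threshold is arbitrary)."""
    return changed_fraction(old_text, new_text) >= min_changed


# Hypothetical usage: old_copy and new_copy would be the page body before and after.
old_copy = "Last updated January. " + "Unchanged paragraph text. " * 20
new_copy = "Last updated March. " + "Unchanged paragraph text. " * 20
print(is_substantive_refresh(old_copy, new_copy))
```

On very short pages a date change alone can clear the threshold, so treat this as a floor check rather than a verdict.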

A confession worth making: even my own materials had to be cleaned up before publishing this piece. Stat misattribution propagates through secondary sources, and primary sources need to be verified before any number gets repeated, including ones that came from research I trust.

The field is moving fast enough that getting this right takes discipline more than instinct. The honest version of GEO advice in 2026 is that we finally have enough data to refute our own folk wisdom. Refresh cadence is the cleanest example.

What B2B teams should actually do

For a serious GEO program, a defensible refresh framework looks like this:

Monthly cadence on high-priority pages. Comparison guides. Pricing and packaging. Integration documentation. The buyer-stage pages that drive pipeline. These are the assets where freshness compounds with intent match and earns citation lift across ChatGPT and Perplexity specifically.

Quarterly cadence on category education and thought leadership. Foundational explainers, definitive guides, the content that builds authority signals over time. The freshness window is wider here because the content is meant to age into authority, not respond to news.

Trigger-based refresh on everything else. Product launches, competitor moves, new compliance requirements, customer wins worth naming, regulatory updates in your category. These don't run on a schedule. They run on events.
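The three tiers above are simple enough to encode in a content calendar or a script. Here is a minimal sketch, assuming 30 days for "monthly" and 90 for "quarterly". The tier names, the `Page` fields, and the `trigger_pending` flag are all hypothetical and would map to whatever your CMS or tracking sheet records.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed intervals: 30 days for monthly, 90 for quarterly, None for trigger-only.
CADENCE_DAYS = {"high_priority": 30, "category": 90, "trigger_only": None}


@dataclass
class Page:
    url: str
    tier: str
    last_refreshed: date
    trigger_pending: bool = False  # e.g. a launch or competitor move was logged


def is_due(page: Page, today: date) -> bool:
    """A page is due if an event is pending or its tier's interval has elapsed."""
    if page.trigger_pending:
        return True
    interval = CADENCE_DAYS[page.tier]
    if interval is None:
        return False  # trigger-only pages never come due on a schedule
    return today - page.last_refreshed >= timedelta(days=interval)


# Hypothetical pages.
pages = [
    Page("/compare/us-vs-them", "high_priority", date(2026, 1, 1)),
    Page("/guides/what-is-geo", "category", date(2026, 1, 1)),
    Page("/blog/old-announcement", "trigger_only", date(2025, 6, 1), trigger_pending=True),
]
today = date(2026, 2, 5)
due = [p.url for p in pages if is_due(p, today)]
print(due)
```

Letting a pending trigger override the schedule on every tier is a design choice here: an event such as a pricing change should pull a comparison guide forward even if it was refreshed last week.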

A platform-specific note for sophisticated readers: if Perplexity is a priority surface for your category, monthly is closer to a floor than a ceiling, because Perplexity weights recency more aggressively than ChatGPT does. If your category is dominated by AI Overviews, quarterly may be sufficient on most pages because AIO has the weakest freshness bias of the major AI surfaces.

The implication for resourcing is the part the 48-hour advice got most wrong. A B2B content team running a serious GEO program needs one writer dedicated to refresh on a recurring schedule, not three writers on a daily treadmill. The wrong cadence didn't just produce wasted effort. It produced the wrong staffing model.

(For the broader workflow this fits inside, the tier-one/tier-two/tier-three content model in Cited describes how refresh, gap, and authority content production should be allocated against measurement signals.)

Boiling it down

The Ahrefs ChatGPT Top 1,000 study, the 17M citation freshness analysis, Seer's bot crawl logs, the SparkToro recommendation-stability work, the AirOps brand persistence numbers, the AIO change-rate research: none of these were available when the 48-hour advice started circulating. The advice wasn't malicious. It was confident in the absence of data. The field has the data now. The advice should be retired.

The verdict barely moves between runs. Your refresh schedule shouldn't either.